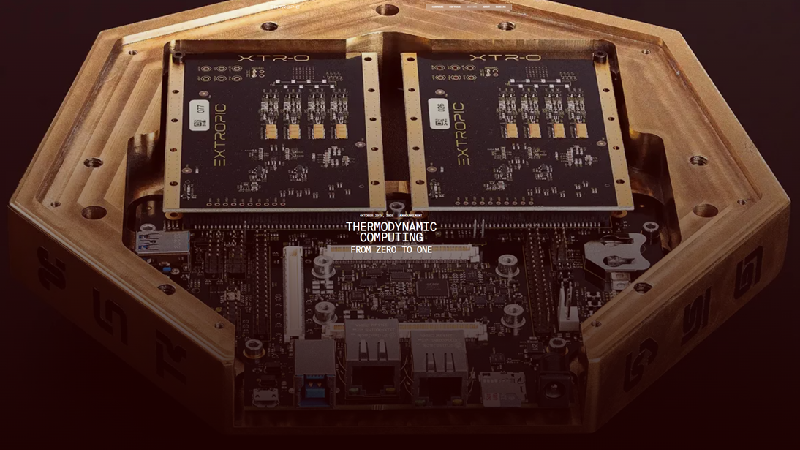

Extropic has unveiled its thermodynamic sampling units (TSUs), a new AI chip design inspired by statistical physics rather than conventional transistor logic. GPUs execute deterministic, step-by-step instructions. TSUs instead produce samples of probable solutions directly, which the company says trades some numerical precision for large energy savings. According to Extropic, the hardware can run AI workloads using 10,000× less energy than current-generation accelerators. Full write-up: *Thermodynamic Computing: From Zero to One*.
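Extropic's write-up is the authority on how TSUs actually work. As a rough software analogy only, the sketch below illustrates what "producing samples of probable solutions" can mean. It assumes the hardware's task resembles drawing samples from an energy-based (Boltzmann) distribution over binary spins. Here a CPU does it the slow way with single-site Gibbs sampling on a tiny Ising model and checks the result against the exact distribution. The couplings `J`, biases `h`, and all function names are hypothetical illustrations, not Extropic's API or chip design.

```python
import itertools
import math
import random
from collections import Counter

def energy(state, J, h):
    """Ising energy E(s) = -sum_{i<j} J[i][j] s_i s_j - sum_i h[i] s_i (J symmetric)."""
    n = len(state)
    e = -sum(h[i] * state[i] for i in range(n))
    for i in range(n):
        for j in range(i + 1, n):
            e -= J[i][j] * state[i] * state[j]
    return e

def exact_distribution(J, h, beta=1.0):
    """Brute-force Boltzmann probabilities p(s) = exp(-beta * E(s)) / Z for every state."""
    states = list(itertools.product((-1, 1), repeat=len(h)))
    weights = [math.exp(-beta * energy(s, J, h)) for s in states]
    z = sum(weights)
    return {s: w / z for s, w in zip(states, weights)}

def gibbs_sample(J, h, steps, beta=1.0, seed=0):
    """Single-site Gibbs sampling; yields the spin state after each full sweep."""
    rng = random.Random(seed)
    n = len(h)
    state = [rng.choice((-1, 1)) for _ in range(n)]
    for _ in range(steps):
        for i in range(n):
            # Local field on spin i from its bias and its coupled neighbours.
            field = h[i] + sum(J[i][j] * state[j] for j in range(n) if j != i)
            # Conditional P(s_i = +1 | rest) = sigmoid(2 * beta * field).
            p_up = 1.0 / (1.0 + math.exp(-2.0 * beta * field))
            state[i] = 1 if rng.random() < p_up else -1
        yield tuple(state)

if __name__ == "__main__":
    # Hypothetical 3-spin model: symmetric couplings and small biases.
    J = [[0.0, 0.8, 0.0],
         [0.8, 0.0, -0.5],
         [0.0, -0.5, 0.0]]
    h = [0.2, 0.0, -0.1]
    samples = list(gibbs_sample(J, h, steps=20000, seed=42))[100:]  # drop burn-in
    counts = Counter(samples)
    for s, p in sorted(exact_distribution(J, h).items()):
        print(s, f"exact={p:.3f}", f"sampled={counts[s] / len(samples):.3f}")
```

The point of the analogy is that the sampler never solves for one "correct" answer. It wanders through states in proportion to their probability. A chip that does this natively with physical noise, rather than simulating it with pseudo-random numbers as above, is where the claimed energy savings would come from.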

Why it matters: For years, Extropic CEO Guillaume Verdon has argued that the future of AI hardware lies in harnessing thermodynamic principles, the same ones that govern energy, entropy, and randomness in nature. The TSU announcement is the first tangible demonstration of that vision. If the efficiency claims hold up at production scale, such hardware could sharply lower the cost of training and deploying models, especially as global energy constraints tighten. The open question is whether this physics-driven approach can move from research curiosity to mainstream production and challenge the GPU dominance of Nvidia and AMD. Learn more at extropic.ai.