Meta’s FAIR research arm just

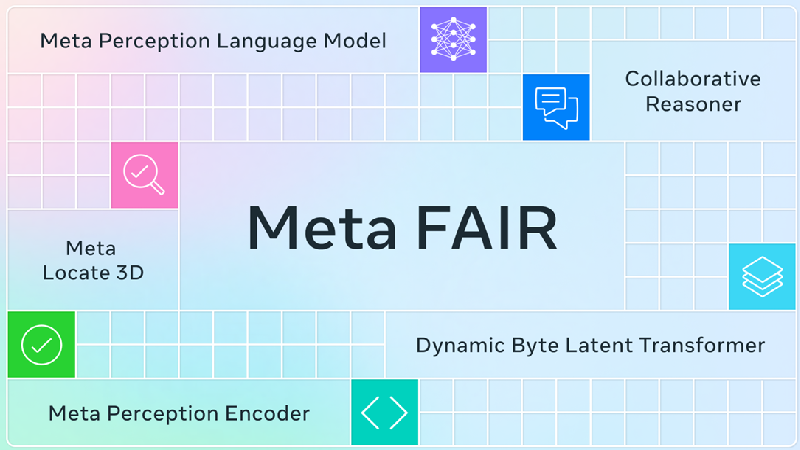

published five new open-source AI research projects focused on perception and reasoning, showcasing advances in computer vision, 3D understanding, and collaborative AI capabilities.

Perception Encoder shows state-of-the-art performance in visual understanding, excelling at tasks like ID’ing camouflaged animals or tracking movements. Meta also introduced the open-source Meta Perception Language Model (PLM) and the PLM-VideoBench benchmark, focusing on video understanding.

Locate 3D enables precise object understanding for AI, and Meta also published a dataset of 130,000 spatial language annotations for training. Finally, a new Collaborative Reasoner framework tests how well AI systems work together, showing nearly 30% better performance than when working alone.

This research batch focuses on AI building blocks like perception, 3D understanding, and reasoning — key steps toward more capable embodied agents and machine intelligence. We’re officially crossing into new territory, with systems that can finally understand and interact with the physical world in advanced ways.